NVIDIA GTC 2026: From GPUs to AI Factories - What Vera Rubin Really Means for Builders

GTC 2026 was the week NVIDIA stopped selling GPUs and started selling AI factories - here’s what that shift means for AI, ML, and data engineers now.

Hello everyone,

Finally, spring is here, with a few sunny days in England (I don’t want to jinx it though). Overall I am feeling happy and trying to get back into my running habit. My one disappointment last week was not being able to attend NVIDIA GTC - I have too much going on to make a trip to the US right now.

Anyway, I have been following all the updates. I have collected the top things you should know in this newsletter.

Let’s have a look.

Folks, GTC 2026 was the week NVIDIA stopped selling us GPUs and started selling us AI factories - hardware, agents, and even token budgets included. Lovely stuff.

For years, GTC keynotes have been about bigger chips, more FLOPs, and eye‑watering benchmarks. This year was different. Mr. Jensen Huang’s message was clear: the center of gravity is moving from individual accelerators to full‑stack “AI factories” that ingest data on one end and ship intelligence on the other.

1. At the heart of that story is Vera Rubin.

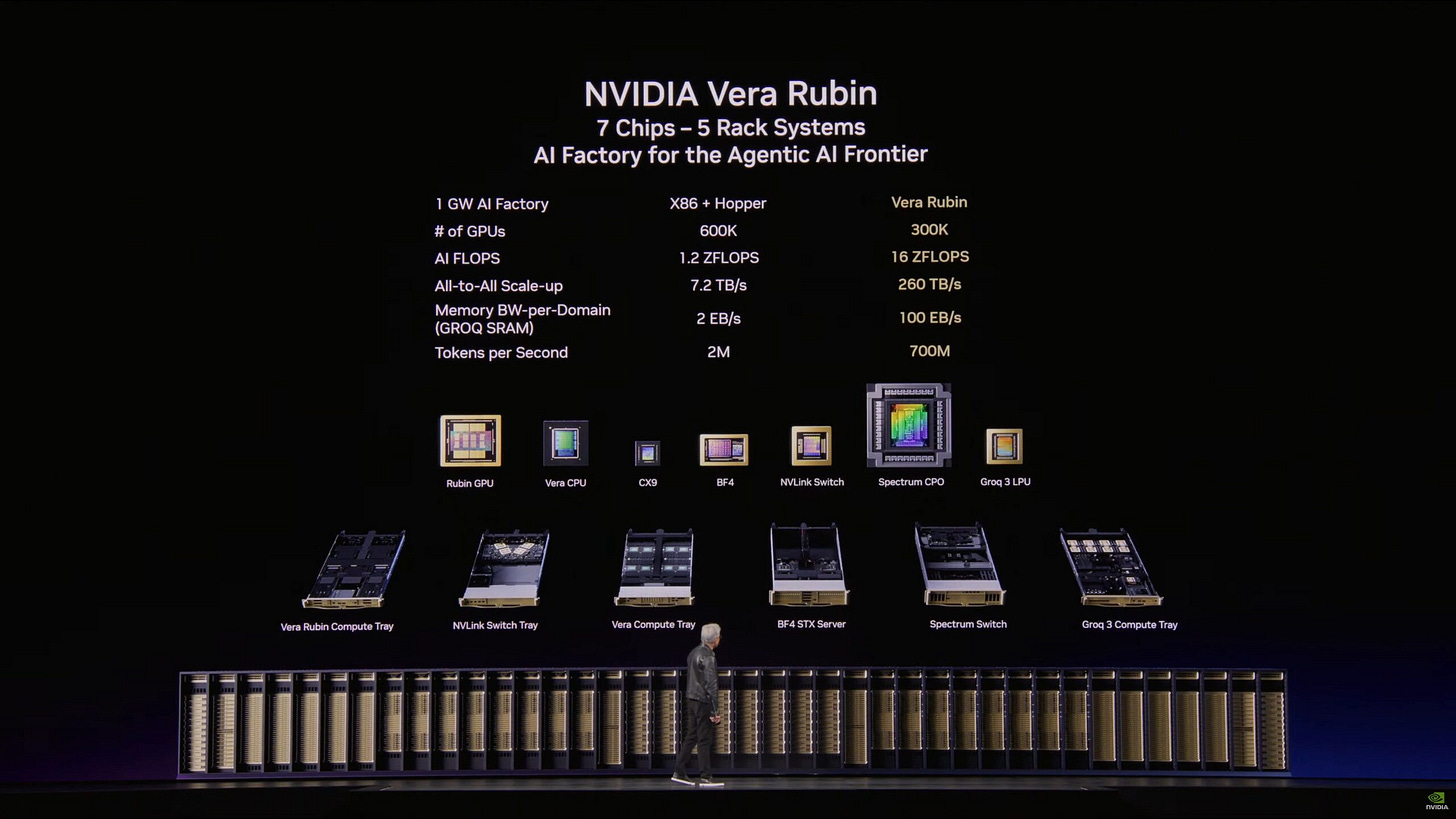

Vera Rubin is an integrated platform: seven specialized chips, multiple rack‑scale systems, a supercomputer, orchestration software, and a roadmap to the next platform, Feynman. If Blackwell was the engine, Rubin is the entire plant. You don’t just get more TFLOPs; you get an opinionated way to build and run agentic systems at scale.

That framing matters if you’re an AI, ML, or data engineer.

Instead of asking “How do I get access to H100s or B100s?”, the real question becomes “Where will my AI factory live, and what will it produce?” That’s a very different conversation about architecture, data, and economics.

2. The trillion‑dollar AI factory build‑out

Mr. Huang also did something subtle but important: he didn’t talk about AI as a feature; he talked about AI as infrastructure. The combined order pipeline he referenced for Blackwell and Vera Rubin runs into the trillion‑dollar range over the next few years. Whether you believe in the exact number or not, the signal is unmistakable.

We’re no longer in the “let’s try a model” phase. We’re in a multi‑year build‑out of AI plants in the same way we once built data centers, clouds, and mobile networks. That means:

Inference economics become a first‑class design constraint.

Token budgets will be as real as laptop or SaaS budgets.

Capacity planning for AI will look more like power and networking planning than like a one‑off POC.

If you’re building products, this is your wake‑up call to treat AI like infrastructure, not a sprinkle of magic dust at the end of a roadmap.
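To make the token-budget idea concrete, here is a minimal back-of-the-envelope sketch in Python. Everything in it is an assumption for illustration: the `FeatureUsage` class, the function names, the token counts, and especially the prices, which are placeholders and not real vendor pricing.

```python
from dataclasses import dataclass


@dataclass
class FeatureUsage:
    """Hypothetical usage profile for one AI-powered feature."""
    name: str
    input_tokens_per_request: int
    output_tokens_per_request: int
    requests_per_user_per_month: float


def cost_per_request(usage: FeatureUsage,
                     usd_per_million_input: float,
                     usd_per_million_output: float) -> float:
    """Cost in USD of one request, given prices per million tokens."""
    input_cost = usage.input_tokens_per_request * usd_per_million_input
    output_cost = usage.output_tokens_per_request * usd_per_million_output
    return (input_cost + output_cost) / 1_000_000


def monthly_cost_per_1k_users(usage: FeatureUsage,
                              usd_per_million_input: float,
                              usd_per_million_output: float) -> float:
    """Monthly cost in USD for 1,000 users of this feature."""
    per_request = cost_per_request(
        usage, usd_per_million_input, usd_per_million_output
    )
    return 1_000 * usage.requests_per_user_per_month * per_request


if __name__ == "__main__":
    # All numbers below are placeholders, not real pricing or traffic.
    summarizer = FeatureUsage("ticket_summarizer", 2_000, 500, 20)
    monthly = monthly_cost_per_1k_users(summarizer, 1.00, 4.00)
    budget_usd = 100.00  # hypothetical monthly budget per 1,000 users
    print(f"{summarizer.name}: ${monthly:.2f} per 1,000 users per month")
    print("within budget" if monthly <= budget_usd else "over budget")
```

With these made-up inputs the feature works out to roughly $80 per 1,000 users per month. The point is less the number and more the habit: once a feature has a line like this, it can be planned and argued about like any other budget item.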

3. The “agentic moment” is now official

Another clear shift: NVIDIA is leaning hard into agentic systems.

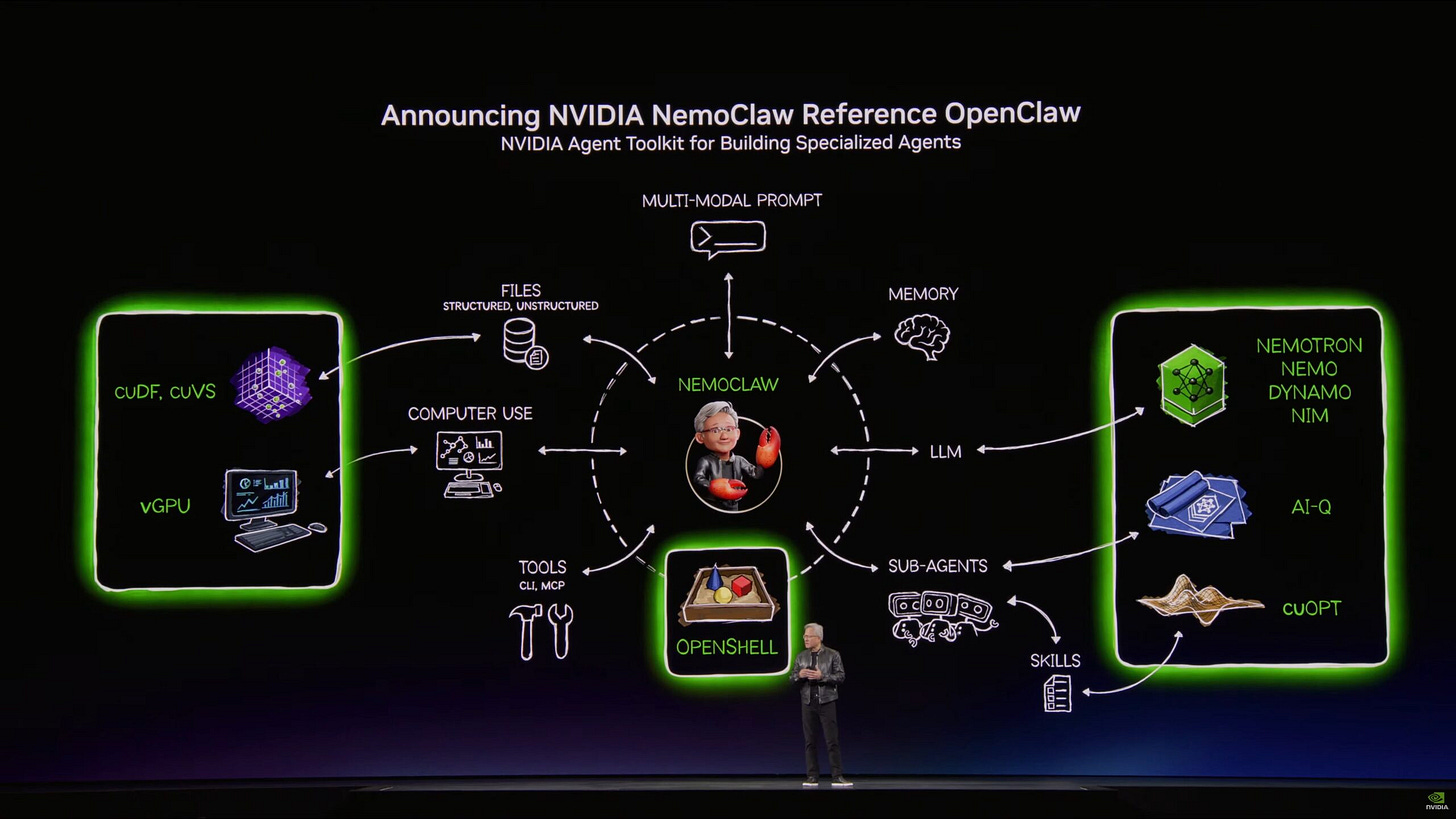

NemoClaw and its surrounding tooling were positioned as core to how enterprises will build with these new platforms. The pattern is no longer “one giant model behind an API.”

It’s:

Tool‑using agents orchestrating calls into models and services.

Multi‑step workflows that reason, plan, and act.

Customization and fine‑tuning on your own data, running on your own slice of an AI factory.

Practically, that means agent orchestration, evaluation, and safety move from hacker‑weekend topics to board‑level concerns. It also means AI and data teams who understand tools, context, and control flows will be disproportionately valuable.
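For readers who have not built one yet, here is a minimal sketch of the tool-using agent pattern described above. It is not NemoClaw or any NVIDIA API: `stub_model`, `run_agent`, and the `TOOLS` registry are hypothetical names, and the "model" is a hard-coded function standing in for a real LLM call. What it does show is the control flow that matters: a loop, an allowlist of tools, and a hard step limit.

```python
from typing import Callable, Dict, List, Tuple

# (action_name, argument_or_result)
Step = Tuple[str, str]

# Allowlist: the only actions this agent is permitted to take.
TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"order {order_id}: shipped",
}


def stub_model(goal: str, history: List[Step]) -> Step:
    """Hypothetical stand-in for an LLM that picks the next action."""
    if not history:
        return ("lookup_order", "A123")
    return ("finish", f"Status for your request: {history[-1][1]}")


def run_agent(goal: str,
              model: Callable[[str, List[Step]], Step],
              tools: Dict[str, Callable[[str], str]],
              max_steps: int = 5) -> str:
    """Plan-act loop with two guardrails: a tool allowlist and a step cap."""
    history: List[Step] = []
    for _ in range(max_steps):
        action, arg = model(goal, history)
        if action == "finish":
            return arg
        if action not in tools:
            # Refuse anything outside the allowlist and tell the model why.
            history.append((action, f"error: unknown tool {action!r}"))
            continue
        history.append((action, tools[action](arg)))
    return "stopped: step limit reached"


if __name__ == "__main__":
    print(run_agent("Where is my order?", stub_model, TOOLS))
```

Swapping `stub_model` for a real model call changes nothing about the loop, which is the design point: evaluation and safety checks attach to the orchestration layer, not to any one model.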

4. Hardware envy and the pace problem

There’s a less comfortable undercurrent to all of this: hardware obsolescence.

If you invested heavily in last year’s “AI factory,” GTC 2026 probably gave you a twinge of regret. Rubin‑class systems move the goalposts again. Throughput, efficiency, network architecture - everything just jumped.

Most teams won’t be able to rip and replace every cycle. So the question becomes: how do you architect for optionality?

(By the way, this applies to any production-grade AI system architecture.)

A few practical edges:

Design around portable abstractions (containers, standard runtimes, open protocols), not vendor‑specific APIs and tooling.

Separate concerns: data platform, model platform, agent layer. You want the freedom to swap pieces as the hardware evolves.

Focus on investments that survive GPU generations: data quality, evaluation, governance, and product integration.

The platforms will keep getting better.

Your moat will be how quickly you can adapt your stack to whatever comes next.
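One way to picture "architecting for optionality" is a thin interface between your agent layer and whatever serves the model. The sketch below is an assumption-laden illustration, not a recommended framework: `TextModel`, `LocalModel`, `HostedModel`, and `summarize_ticket` are hypothetical names, and neither backend makes a real model or network call.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only contract the agent layer is allowed to depend on."""

    def generate(self, prompt: str) -> str: ...


class LocalModel:
    """Hypothetical backend: a model served on hardware you own today."""

    def generate(self, prompt: str) -> str:
        return f"[local] {prompt}"


class HostedModel:
    """Hypothetical backend: a model served from someone else's AI factory."""

    def __init__(self, endpoint: str) -> None:
        self.endpoint = endpoint

    def generate(self, prompt: str) -> str:
        # A real implementation would call the endpoint; this sketch does not.
        return f"[hosted at {self.endpoint}] {prompt}"


def summarize_ticket(model: TextModel, ticket: str) -> str:
    """Agent-layer code: knows the interface, not the hardware behind it."""
    return model.generate(f"Summarize: {ticket}")


if __name__ == "__main__":
    for backend in (LocalModel(), HostedModel("https://example.invalid/v1")):
        print(summarize_ticket(backend, "Printer on floor 3 is offline"))
```

When the next hardware generation lands, the change is one new class that satisfies `TextModel`; the agent code and its evaluations stay put.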

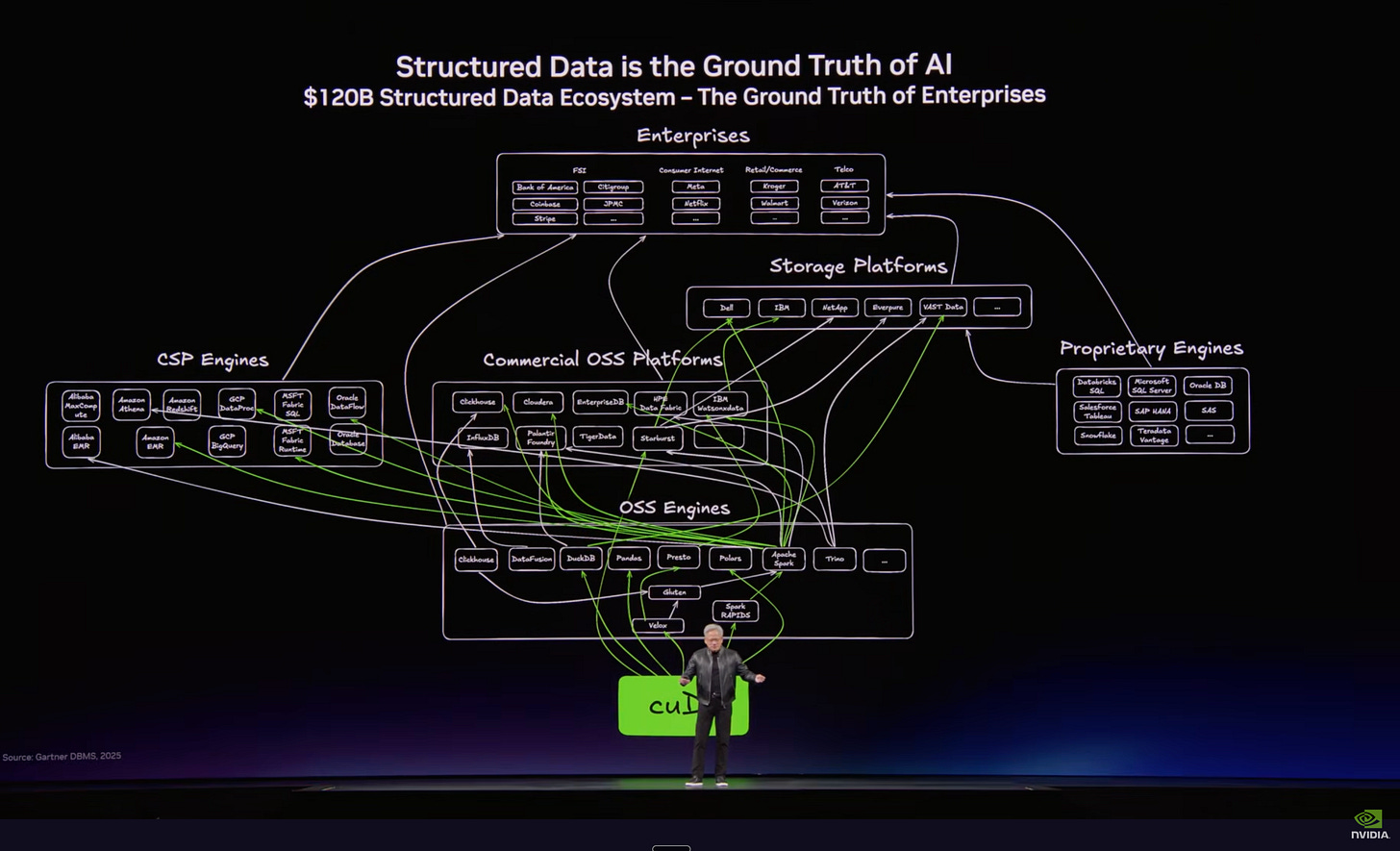

5. Data and the physical world reclaim the spotlight

One of my favorite subplots from this GTC is that structured data, simulation, and physical AI quietly stepped into the spotlight.

DLSS 5 and the new wave of neural rendering aren’t just about prettier video games. They’re about real‑time, photorealistic, physics‑aware environments you can use to train and validate agents. Combine that with better edge hardware and you get a serious push toward robots, industrial agents, and AI systems that interact with the messy real world.


For data people, the implication is simple: tables, events, and logs are still the fuel. For AI engineers, simulations and digital twins are becoming as important as datasets. For product teams, the bar for “realistic” behavior in AI‑powered experiences just went up.

Why this GTC matters for you

If you strip away the marketing, GTC 2026 is telling builders three things:

The unit of competition is shifting from model to factory.

Agentic systems will be the default pattern for serious AI products.

The compounding advantage still comes from data, evaluation, and integration - not just chips.

If you’re in AI, ML, or data, your edge will come from how fast you can align your architecture, practices, and skills with that reality.

What you can actually do next (without a Rubin cluster)

Most of us are not spinning up NVIDIA Vera Rubin systems next quarter. The realistic move is to upgrade how you think, learn, and design.

Here are four learning objectives you can pursue right now:

Think in “AI factories,” not just models

Map your current stack - data collection, feature engineering, model training, deployment, monitoring - against the AI factory idea. Where is data still manual? Where is evaluation an afterthought? Where are agents bolted on instead of designed in from the start?

Get comfortable with inference and token economics

Even if you’re using cheap or free models, start tracking tokens‑per‑feature and cost‑per‑request. A simple spreadsheet or dashboard that shows “this feature costs X per 1,000 users” will change how you design prompts, choose models, and argue for optimizations.

Practice building small, robust agentic flows

Use open‑source frameworks or your favorite LLM stack to wire up basic agents: retrieval + tool calling + simple planning. Focus less on exotic models and more on reliability, evaluation, and clear boundaries for what the agent should and shouldn’t do.

Re‑center your work on data, evaluation, and simulation

Treat your tables, logs, and events as the core asset, not an afterthought. Experiment with offline evaluation harnesses. If your domain touches the physical world, explore simple simulation or synthetic scenarios - even if you’re not using Omniverse‑grade tools yet.
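If "offline evaluation harness" sounds heavier than it is, here is a minimal sketch of one in Python. The names (`run_eval`, `toy_system`), the test cases, and the substring-match scoring rule are all assumptions chosen for brevity; a real harness would use scoring suited to your domain and call your actual system instead of a canned lookup.

```python
from typing import Callable, Dict, List, Tuple

# (input prompt, substring the answer is expected to contain)
EvalCase = Tuple[str, str]


def run_eval(system: Callable[[str], str], cases: List[EvalCase]) -> Dict:
    """Run every case through the system and collect pass/fail details."""
    failures = []
    for prompt, expected in cases:
        output = system(prompt)
        # Deliberately simple scoring rule: case-insensitive substring match.
        if expected.lower() not in output.lower():
            failures.append(
                {"input": prompt, "expected": expected, "output": output}
            )
    passed = len(cases) - len(failures)
    return {
        "total": len(cases),
        "passed": passed,
        "pass_rate": passed / len(cases) if cases else 0.0,
        "failures": failures,
    }


def toy_system(prompt: str) -> str:
    """Hypothetical stand-in for your model or agent."""
    canned = {
        "refund policy": "Refunds are accepted within 30 days.",
        "shipping time": "Orders ship in 2 days.",
    }
    return canned.get(prompt, "I don't know.")


if __name__ == "__main__":
    cases = [
        ("refund policy", "30 days"),
        ("shipping time", "2 days"),
        ("warranty", "1 year"),
    ]
    report = run_eval(toy_system, cases)
    print(f"{report['passed']}/{report['total']} passed")
    for failure in report["failures"]:
        print("FAILED:", failure["input"])
```

Keep the cases in a file next to your code and run this on every prompt or model change. That small habit is the kind of investment that survives GPU generations.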

This is too long already, folks. I will stop here.

Thank you for reading, and please leave your comments and feedback. Get in touch and tell me what you are learning and what you would like to know more about.

P.S. If you’re new here - welcome 🎉. AgentBuild is a community of practitioners working through the real challenges of getting AI into production inside large organisations. Every week I share practical, grounded thinking from the people doing this work at the sharp end. The goal is never theory - it’s always: what can you use Monday morning.

Ask your friends to join.

More valuable content coming your way.


Vera Rubin is the density story nobody’s tracking closely enough. 144 GPUs in a single rack changes the math on inference latency and cooling budgets simultaneously. The real question for builders: does your orchestration layer keep up with the hardware cadence, or are you bottlenecked at the software layer?