The High Agency Engineer Will Win the AI Era. Here's What I'm Seeing in the Field.

My job gives me an unusual view. I get to sit inside a lot of organisations and watch how engineering teams are actually responding to AI. Not the conference version. The real version.

I’m seeing something in the field right now that is genuinely opening my eyes.

I’m lucky. My job puts me in front of a lot of engineering teams across a lot of organisations. Some are moving fast. Some are moving slow. And I get to see both. Not from a distance but up close, in the actual conversations where decisions get made.

What I’m watching is a quiet split happening inside engineering teams. And I think it matters for anyone thinking about where this profession is heading.

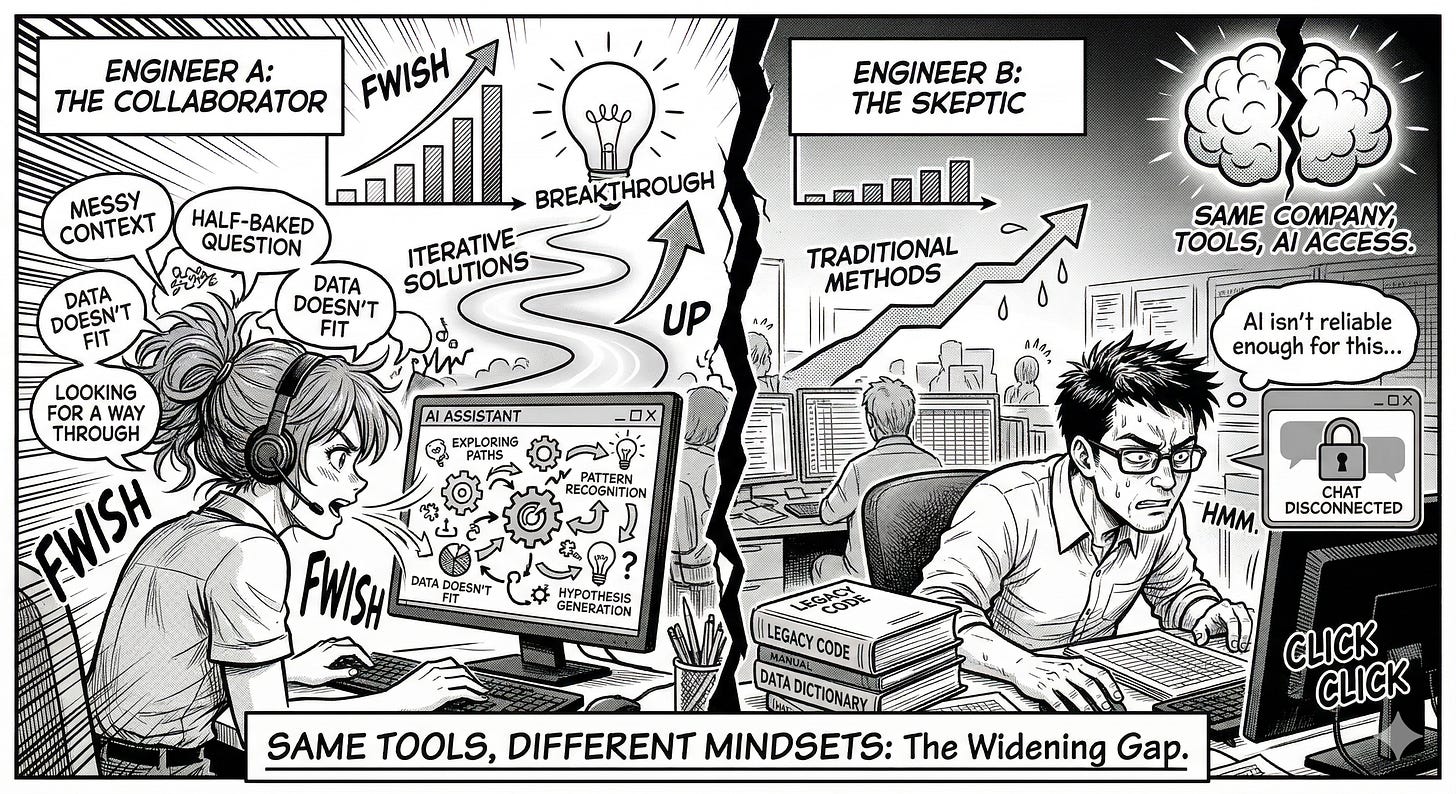

Two engineers. Same company. Same tools available. Same access to AI. Completely different outcomes.

One of them, when they hit a hard problem, opens a chat window and starts working through it out loud. They dump in the messy context. The half-baked question. The data that doesn’t quite make sense yet. They’re not looking for autocomplete. They’re looking for a way through.

The other one says “AI isn’t reliable enough for this.” And goes back to doing it the slow way.

I’ve watched this play out across banks, fintechs, and large regulated enterprises. And the gap between these two engineers is only getting wider.

What high agency actually looks like

I was working with a team recently trying to make sense of a large pile of unstructured documents. Audit logs, policy docs, historical reports. The kind of work that normally takes weeks of someone’s time.

One engineer on the team didn’t wait to be told how. She had no prior experience with the specific tooling. But she sat down, broke the problem into pieces, and used AI to work through each one. By end of day she had something working. Not perfect. But working.

She didn’t have a playbook. She made one.

That’s what high agency looks like in practice. Not waiting for a process document. Not waiting for someone to say it’s approved. When high agency engineers hit a wall, their first instinct is to figure out what question to ask - not to explain why the wall is there.

What the resistance sounds like

I want to be careful here. The engineers pushing back on AI are not lazy. Many of them are the most experienced people in the room.

But the resistance has a pattern.

“It hallucinates too much for our use case.”

“Security hasn’t signed it off yet.”

“The outputs aren’t consistent enough to trust.”

“This is hype, let it settle.”

Some of these are valid. I work in regulated environments. I understand the constraints.

But what I notice is this. The engineers saying these things have usually not given AI their hardest problem. They’ve given it easy tasks, watched it stumble, and concluded it isn’t ready. They’re evaluating a tool they haven’t really pushed.

The high agency engineers hit the same limitations. They just treat them as constraints to work around, not reasons to stop.

There’s something underneath the resistance

I think it goes deeper than technology skepticism.

A lot of experienced engineers have built their identity around already knowing the answer. They’re the person people come to. The one who’s seen this before.

AI is uncomfortable for that identity. Because the value is shifting. It’s moving away from already knowing - toward knowing how to ask. That’s a different skill. And it asks you to be a beginner again, at least partially.

The engineers I see thriving have a looser grip on what they already know. They’re curious before they’re skeptical.

They pick the tool up before they critique it.

One practical thing

Ask yourself honestly: when did you last give AI your genuinely hardest problem?

Not “summarise this document” or “tidy up this function.”

The real hard thing. The one you’ve been circling because you don’t quite know where to start.

I’ve seen engineers use AI to compress weeks of analysis into a day. I’ve seen it catch patterns in production failures that a team had been chasing for months. I’ve seen it unlock a business conversation that had been stuck for a quarter - just by helping someone structure their thinking clearly enough to explain it.

None of that happened because the technology was perfect. It happened because someone decided to figure it out.

The job of an engineer is changing. I’m watching it happen. The ones adapting aren’t the most experienced or the most technical. They’re the ones most willing to stay curious.

That’s the only practical advice I have.

One question before you go - what's the most interesting thing you've seen an engineer do with AI that nobody is talking about yet?

Hit reply and tell me.

Talk soon,

Sandi

P.S. If you’re new here - welcome 🎉. AgentBuild is a community of practitioners working through the real challenges of getting AI into production inside large organisations. Every week I share practical, grounded thinking from the people doing this work at the sharp end. The goal is never theory - it’s always: what can you use Monday morning.

Ask your friends to join.

More valuable content coming your way.
